Significance

Keypoints

- Propose a framework for compression of point cloud data based on octree structure

- Demonstrate performance of proposed method on static and dynamic point cloud compression

Review

Background

A point in 3-dimensional space can be represented by three real numbers: its x-, y-, and z-coordinates. This may seem like a small amount of data, but it adds up quickly in digital form. Assuming each coordinate is stored as a single-precision floating-point number, one point requires 3$\times$32 bits (12 bytes). A point cloud is a set of such points in 3D space, and the storage and computational burden grows rapidly for point cloud data with high spatial and temporal resolution.
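
As a quick check of the storage arithmetic, the sketch below computes the raw size of a point cloud sequence that stores only xyz coordinates as 32-bit floats (4 bytes each, so 12 bytes per point). The function name and the workload figures (100,000 points per frame, 10 frames per second, 60 seconds) are hypothetical values chosen for illustration, not numbers from the paper.

```python
import struct

# A single-precision (IEEE 754 binary32) float occupies 4 bytes = 32 bits.
BYTES_PER_COORD = struct.calcsize("<f")
BYTES_PER_POINT = 3 * BYTES_PER_COORD  # x, y, z


def raw_size_bytes(points_per_frame, num_frames=1):
    """Uncompressed size of a sequence that stores only xyz as float32."""
    return points_per_frame * num_frames * BYTES_PER_POINT


# Hypothetical workload: 100,000 points per frame, 10 frames/s, 60 s.
total = raw_size_bytes(100_000, num_frames=10 * 60)
print(BYTES_PER_POINT, "bytes per point")
print(total / 1e6, "MB uncompressed")
```

Under these assumed figures, one minute of data already occupies 720 MB before any attributes such as color or intensity are added.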

An octree is an alternative, tree-structured representation of a point cloud: space is recursively divided into eight octants, and each node records which of its eight children are occupied as an 8-bit binary code. Because this representation is both binary and sparse, octree-based methods have been proposed to compress point cloud data. However, octree-based methods usually suffer from a loss of precision compared to voxel-based methods. The authors propose a method that recovers point cloud coordinates from octree-compressed data with two separate neural networks.
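
To make the octree representation concrete, here is a minimal sketch that turns points on an integer grid into per-node 8-bit occupancy codes, level by level. The function name, the child-index bit order (x, then y, then z), and the assumption that points are already quantized to integers in `[0, 2**depth)` are illustrative choices, not the paper's implementation.

```python
def build_octree_codes(points, depth):
    """Return one dict per octree level mapping node coords -> 8-bit occupancy.

    points: iterable of (x, y, z) integers in [0, 2**depth).
    Bit k of a node's code is set when its k-th child octant holds a point.
    """
    levels = []
    for level in range(depth):
        shift = depth - level - 1  # bit of each coordinate that picks the child
        codes = {}
        for x, y, z in points:
            # Node at this level that contains the point.
            node = (x >> (shift + 1), y >> (shift + 1), z >> (shift + 1))
            # Child octant index in 0..7, one bit per axis.
            child = (((x >> shift) & 1) << 2) | (((y >> shift) & 1) << 1) | ((z >> shift) & 1)
            codes[node] = codes.get(node, 0) | (1 << child)
        levels.append(codes)
    return levels


levels = build_octree_codes([(0, 0, 0), (3, 3, 3)], depth=2)
for level, codes in enumerate(levels):
    for node, code in sorted(codes.items()):
        print(level, node, format(code, "08b"))
```

Only occupied nodes are stored, which is where the sparsity comes from. Any detail finer than the leaf voxel size is discarded by the quantization step, which is the loss of precision mentioned above.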

Keypoints

Propose a framework for compression of point cloud data based on octree structure

The key idea of the proposed method comes not from the neural network itself, but from how the data prior for reconstruction is defined.

The authors propose to reconstruct voxel coordinates from an octree structure that preserves neighboring-node information at the same level, denoted $V_{i}$.

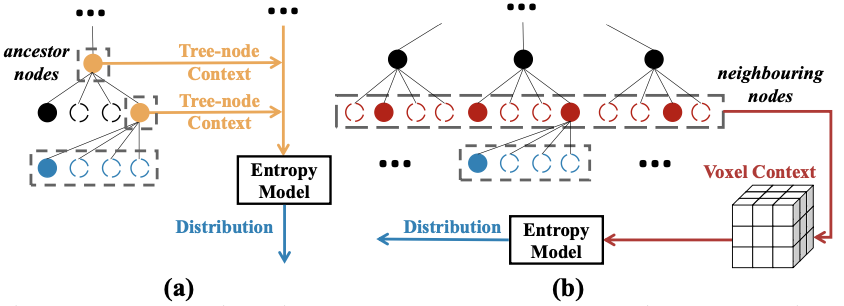

Reconstruction prior of (a) previous octree methods (b) proposed method

A sparse binary voxel context from the same octree level (red circles in (b) of the above figure) is input to the neural network, which learns to reconstruct the 8-bit binary octree code of the next level (blue circles).

Rich prior information, including neighborhood information, allows the neural network to reconstruct more precise coordinates for each point.
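
The voxel context can be pictured as a small binary cube cut out around a node at its own octree level. The sketch below builds such a cube from a set of occupied node coordinates; the function name, the set-based input, and the 3×3×3 default window are assumptions made for illustration, and the network that consumes this input is not modeled here.

```python
def voxel_context(occupied, center, size=3):
    """Binary size x size x size occupancy cube centered on a node.

    occupied: set of (x, y, z) integer coordinates of occupied nodes
              at one octree level.
    center:   coordinates of the node whose context is extracted.
    """
    r = size // 2
    cx, cy, cz = center
    return [[[int((cx + dx, cy + dy, cz + dz) in occupied)
              for dz in range(-r, r + 1)]
             for dy in range(-r, r + 1)]
            for dx in range(-r, r + 1)]


occupied = {(1, 1, 1), (2, 1, 1), (5, 5, 5)}
ctx = voxel_context(occupied, center=(1, 1, 1))
num_set = sum(v for plane in ctx for row in plane for v in row)
print(num_set, "occupied voxels in the local context")
```

Nodes outside the window, such as `(5, 5, 5)` here, do not contribute, so the context stays local and small regardless of the size of the full point cloud.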

The reconstructed coordinates are further refined by a separate neural network, which predicts the offset of each point from the same data prior.
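
The refinement step amounts to adding a predicted offset to a coarse coordinate. The sketch below assumes, for illustration only, that the coarse coordinate is the center of the decoded leaf voxel; the helper names, the `origin` parameter, and the offset values are hypothetical, with the offset standing in for the output of the second network.

```python
def voxel_center(index, voxel_size, origin=(0.0, 0.0, 0.0)):
    """Coarse coordinate: center of the leaf voxel with the given integer index."""
    return tuple(o + (i + 0.5) * voxel_size for i, o in zip(index, origin))


def refine(coarse, offset):
    """Refined coordinate: coarse coordinate plus a per-point offset."""
    return tuple(c + d for c, d in zip(coarse, offset))


coarse = voxel_center((1, 0, 2), voxel_size=0.5)
# Placeholder offset; in the described method a network would predict it.
refined = refine(coarse, (0.125, -0.125, 0.0))
print(coarse, refined)
```

Without refinement, the error along each axis can be as large as half the voxel size, so a good offset prediction directly recovers precision lost to quantization.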

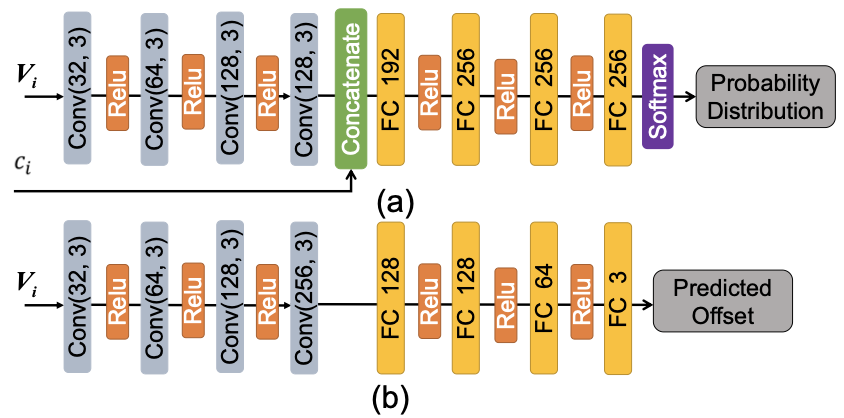

The probability of the next octree level is obtained by one neural network (a), and the coordinates are then refined by adding the offset predicted by another neural network (b)

The paper also extends the method to dynamic point cloud data.

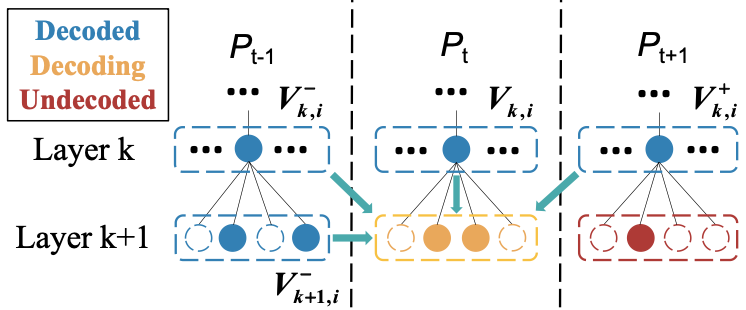

Extension to dynamic point cloud compression

To reconstruct a point $P_{t}$ at time $t$, four pieces of prior information are used in the dynamic case (blue circles in the above figure).

These four priors comprise the current-layer information of the octree at times $t-1$, $t$, and $t+1$, along with the next-layer information of the octree at time $t-1$.
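
One way to picture the four priors is as four local occupancy windows gathered from neighboring frames. The sketch below is a loose illustration under several assumptions: `frames[t][level]` is a hypothetical layout holding the set of occupied node coordinates, the window radius is arbitrary, and the next-layer window is taken over the children of the current-layer window (child coordinates `2c` and `2c + 1` per axis). None of these details are taken from the paper.

```python
def window(occupied, lo, hi):
    """Binary occupancy grid over the inclusive coordinate box [lo, hi]."""
    return [[[int((x, y, z) in occupied)
              for z in range(lo[2], hi[2] + 1)]
             for y in range(lo[1], hi[1] + 1)]
            for x in range(lo[0], hi[0] + 1)]


def dynamic_priors(frames, t, level, center, radius=1):
    """Collect the four priors for a node at `center` in frame t."""
    lo = tuple(c - radius for c in center)
    hi = tuple(c + radius for c in center)
    # Children of a node at coordinate c sit at 2c and 2c + 1 on each axis.
    lo_next = tuple(2 * v for v in lo)
    hi_next = tuple(2 * v + 1 for v in hi)
    return {
        "current_layer_t-1": window(frames[t - 1][level], lo, hi),
        "current_layer_t": window(frames[t][level], lo, hi),
        "current_layer_t+1": window(frames[t + 1][level], lo, hi),
        "next_layer_t-1": window(frames[t - 1][level + 1], lo_next, hi_next),
    }


# Three toy frames, each with two octree levels (hypothetical data).
frames = [
    {0: {(0, 0, 0)}, 1: {(0, 0, 0), (1, 1, 1)}},
    {0: {(0, 0, 0)}, 1: {(1, 0, 0)}},
    {0: {(1, 0, 0)}, 1: {(2, 0, 0)}},
]
priors = dynamic_priors(frames, t=1, level=0, center=(0, 0, 0))
print({name: len(grid) for name, grid in priors.items()})
```

The next-layer window is twice as wide per axis as the current-layer windows because each node expands into two children along every axis.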

The performance of both static and dynamic point cloud compression is demonstrated by experiments.

Demonstrate performance of proposed method on static and dynamic point cloud compression

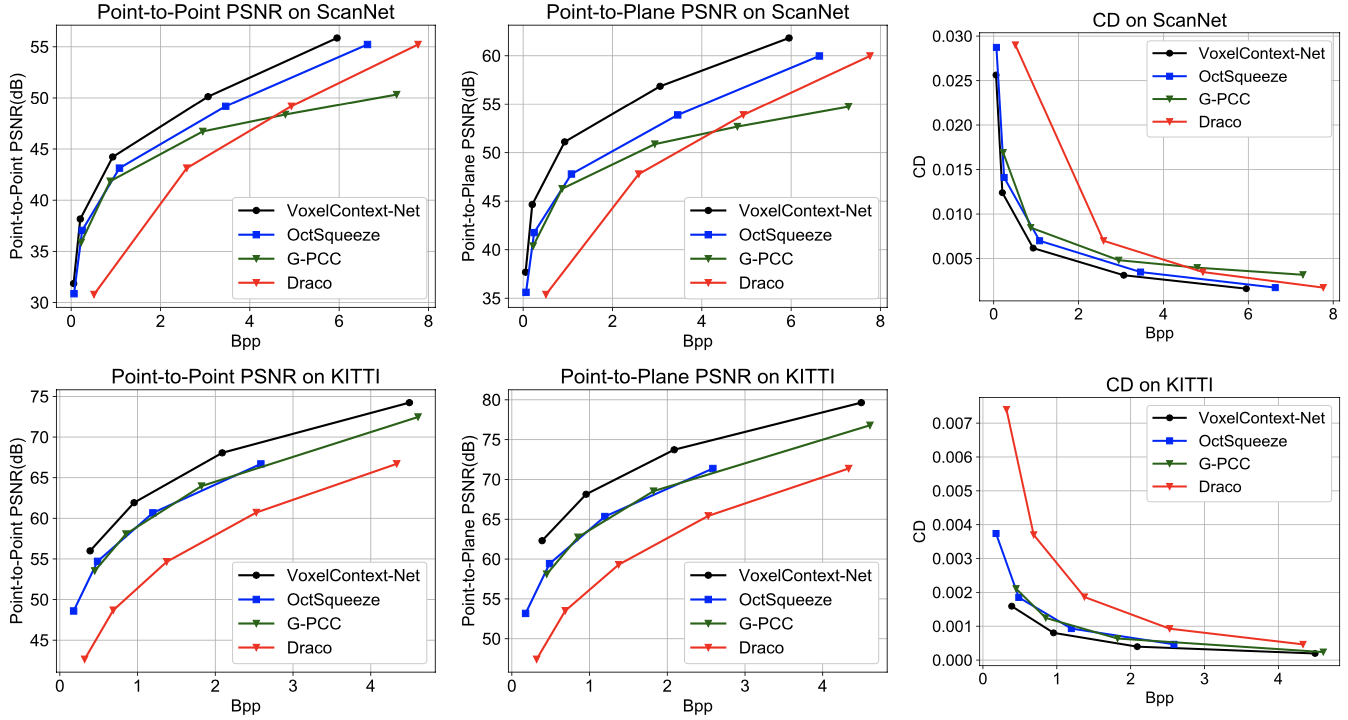

The proposed method, VoxelContext-Net, is compared with baseline point cloud compression methods, including G-PCC, Draco, and OctSqueeze, on the ScanNet and SemanticKITTI datasets.

VoxelContext-Net outperforms the baseline methods in terms of both reconstruction quality and compression rate.

Static point cloud reconstruction quality of the proposed method

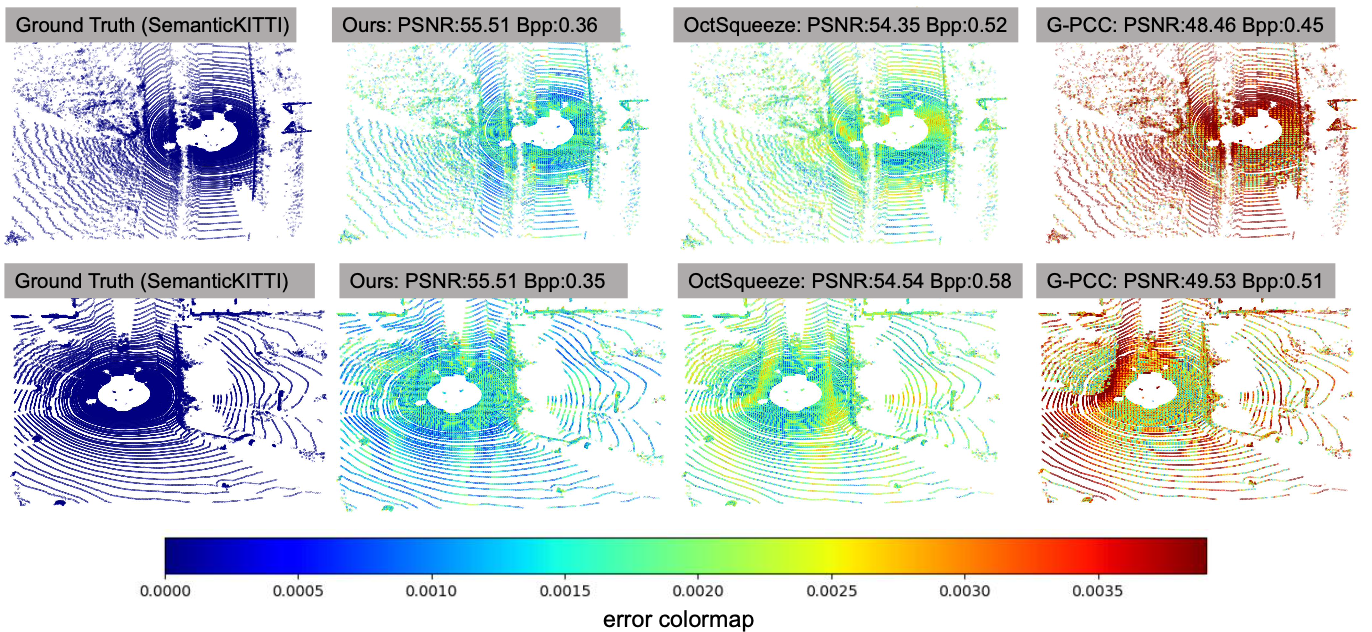

Qualitative demonstration of the static reconstruction quality

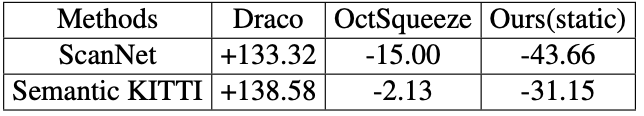

Compression rate (BDBR in %) of the proposed method for static data

The proposed VoxelContext-Net also outperforms the baseline methods on dynamic point cloud compression.

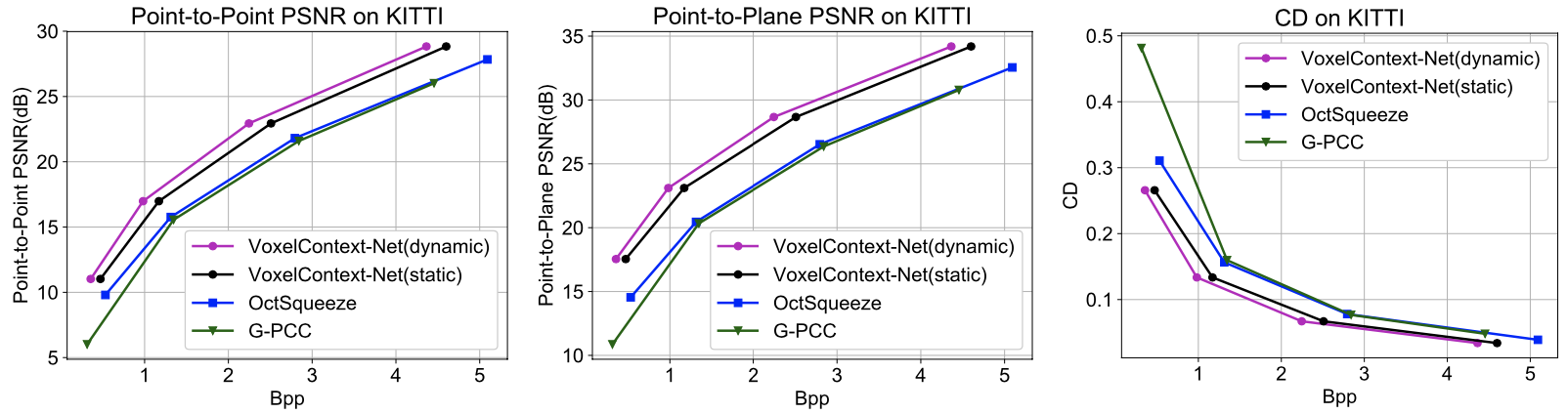

Dynamic point cloud reconstruction quality of the proposed method

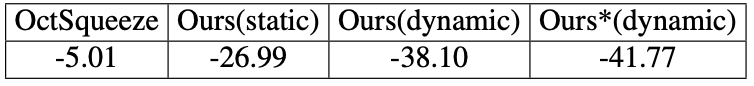

Compression rate (BDBR in %) of the proposed method for dynamic data

For further experimental results, including an ablation study, computational complexity, and downstream task performance, see the original paper.