Significance

LLMs can zero-shot forecast the future

Review

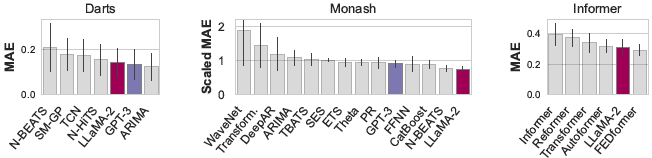

Because large language models (LLMs) can perform a wide range of tasks zero-shot, time series forecasting is a natural candidate application. The authors propose LLMTime and demonstrate that LLMs can be used for time series forecasting without any additional fine-tuning. The key components of LLMTime are a carefully designed tokenization scheme with added spaces, rescaling of the input values, and sampling-based forecasting. LLMTime with GPT-3 and LLaMA2 achieves competitive zero-shot results on a number of benchmarks, including Darts, Monash, and Informer.
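To make the three components above more concrete, here is a minimal Python sketch of how a numeric series might be rescaled, serialized into text with added spaces, and decoded back. It is an illustration under stated assumptions, not the authors' implementation: the function names (`rescale`, `encode`, `decode`, `point_forecast`), the percentile-based scaling, the fixed precision of two decimals, the dropped decimal point, and the median aggregation are all choices made for this example.

```python
import numpy as np


def rescale(values, percentile=95.0):
    """Divide by a high percentile of |values| so most numbers are of order 1.

    The percentile is an assumed hyperparameter for this sketch.
    """
    values = np.asarray(values, dtype=float)
    scale = np.percentile(np.abs(values), percentile)
    if scale == 0:
        scale = 1.0  # avoid division by zero for an all-zero series
    return values / scale, scale


def encode(values, precision=2):
    """Serialize values as fixed-precision integers with the decimal point
    dropped, a space between every character, and ' , ' between values."""
    tokens = []
    for v in values:
        digits = str(int(round(float(v) * 10**precision)))
        tokens.append(" ".join(digits))
    return " , ".join(tokens)


def decode(text, precision=2):
    """Invert encode(): strip spaces, split on commas, restore the decimals."""
    values = []
    for chunk in text.split(","):
        digits = chunk.replace(" ", "")
        if digits:
            values.append(int(digits) / 10**precision)
    return values


def point_forecast(samples):
    """Aggregate several decoded sample continuations (equal length)
    into one forecast by taking the per-step median."""
    return np.median(np.asarray(samples, dtype=float), axis=0)


prompt = encode([0.12, 1.5, 23.0])
print(prompt)  # 1 2 , 1 5 0 , 2 3 0 0
```

In this sketch the encoded string would be passed to the LLM as a prompt, several continuations would be sampled and passed through `decode`, and `point_forecast` would combine them before multiplying by the stored scale to return to the original units.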

LLMTime with GPT-3 and LLaMA2 achieves competitive zero-shot results

The authors further investigate which properties of LLMs may underlie their inherent ability to forecast upcoming values.
