Significance

Better RAG by verifying the retrieval and generation steps

Review

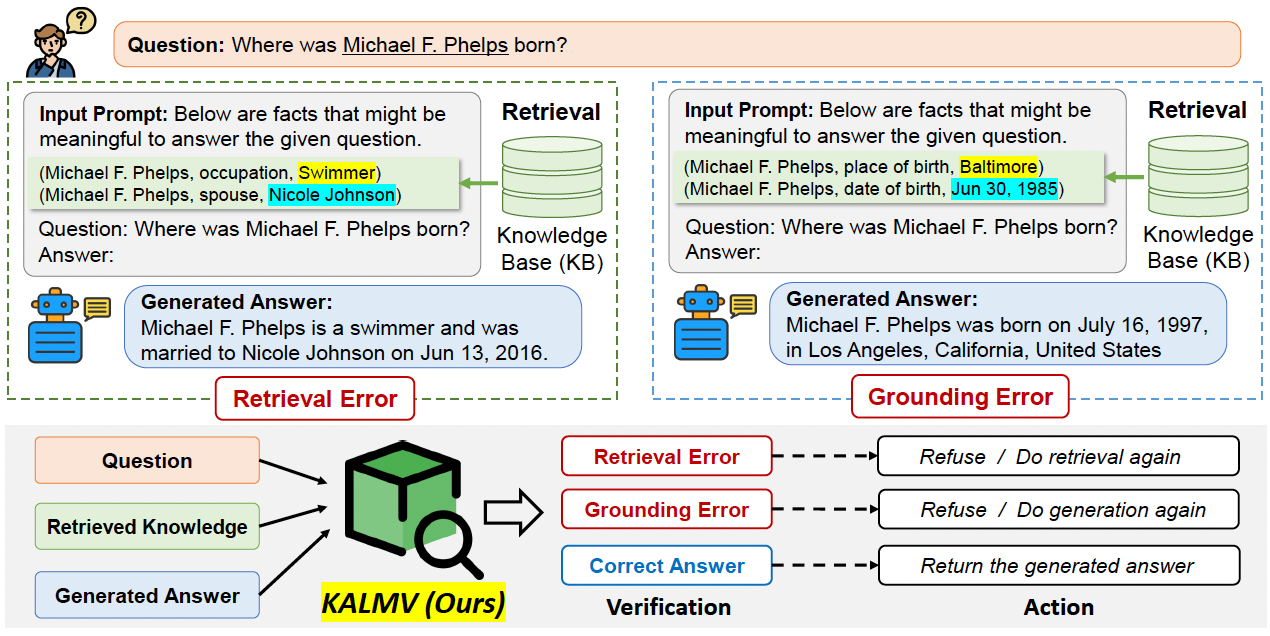

The authors propose Knowledge-Augmented Language Model Verification (KALMV), which aims to improve the quality and factuality of retrieval-augmented generation (RAG). KALMV extends vanilla RAG with an auxiliary language model that is instruction-tuned to verify whether 1) the retrieved knowledge is correct, and 2) the generated answer is appropriately grounded in the retrieved knowledge. If KALMV detects an error in either the retrieval or the generation stage, the main language model performs the retrieval-generation process again.
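The verify-and-retry loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `retrieve`, `generate`, and `verify` are hypothetical callables standing in for the retriever, the main language model, and the instruction-tuned verifier; the two-boolean `Verdict` type, passing the attempt index to the retriever so it can return different passages on each round, and the `max_rounds` cap are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Verdict:
    # Hypothetical verifier output: one flag per checked stage.
    retrieval_ok: bool  # is the retrieved knowledge correct?
    grounding_ok: bool  # is the answer grounded in that knowledge?


def kalmv_style_answer(
    question: str,
    retrieve: Callable[[str, int], List[str]],
    generate: Callable[[str, List[str]], str],
    verify: Callable[[str, List[str], str], Verdict],
    max_rounds: int = 3,
) -> Optional[str]:
    """Retrieve, generate, verify; rerun both stages if the verifier flags an error."""
    answer: Optional[str] = None
    for attempt in range(max_rounds):
        # Assumption: the retriever uses `attempt` to return different passages.
        knowledge = retrieve(question, attempt)
        answer = generate(question, knowledge)
        verdict = verify(question, knowledge, answer)
        if verdict.retrieval_ok and verdict.grounding_ok:
            return answer  # both checks passed
    return answer  # no round passed: fall back to the last answer (None if max_rounds == 0)


# Toy usage: the first retrieval returns a wrong passage, the second a correct one.
corpus = [["The Eiffel Tower is in Rome."], ["The Eiffel Tower is in Paris."]]


def toy_retrieve(question: str, attempt: int) -> List[str]:
    return corpus[min(attempt, len(corpus) - 1)]


def toy_generate(question: str, knowledge: List[str]) -> str:
    # Pretend the LM copies the city name from the first passage.
    return knowledge[0].split(" in ")[1].rstrip(".")


def toy_verify(question: str, knowledge: List[str], answer: str) -> Verdict:
    # Toy checks: knowledge is "correct" if it mentions Paris;
    # the answer is "grounded" if it appears verbatim in the passage.
    return Verdict(retrieval_ok="Paris" in knowledge[0], grounding_ok=answer in knowledge[0])


print(kalmv_style_answer("Where is the Eiffel Tower?", toy_retrieve, toy_generate, toy_verify))
```

In the toy run, the first round produces "Rome", which the verifier rejects as resting on incorrect knowledge, so the loop retrieves again and returns "Paris". Capping the number of rounds keeps the cost bounded when the verifier keeps flagging errors.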

The KALMV


The authors show experimentally that KALMV is effective at reducing hallucinations.

Related

- Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection

- Video Language Planning

- PaLI-3 Vision Language Models: Smaller, Faster, Stronger

- Large Language Models Are Zero-Shot Time Series Forecasters

- Empowering Psychotherapy with Large Language Models: Cognitive Distortion Detection through Diagnosis of Thought Prompting