Significance

Better RAG through self-reflection on the retrieval and generation process

Review

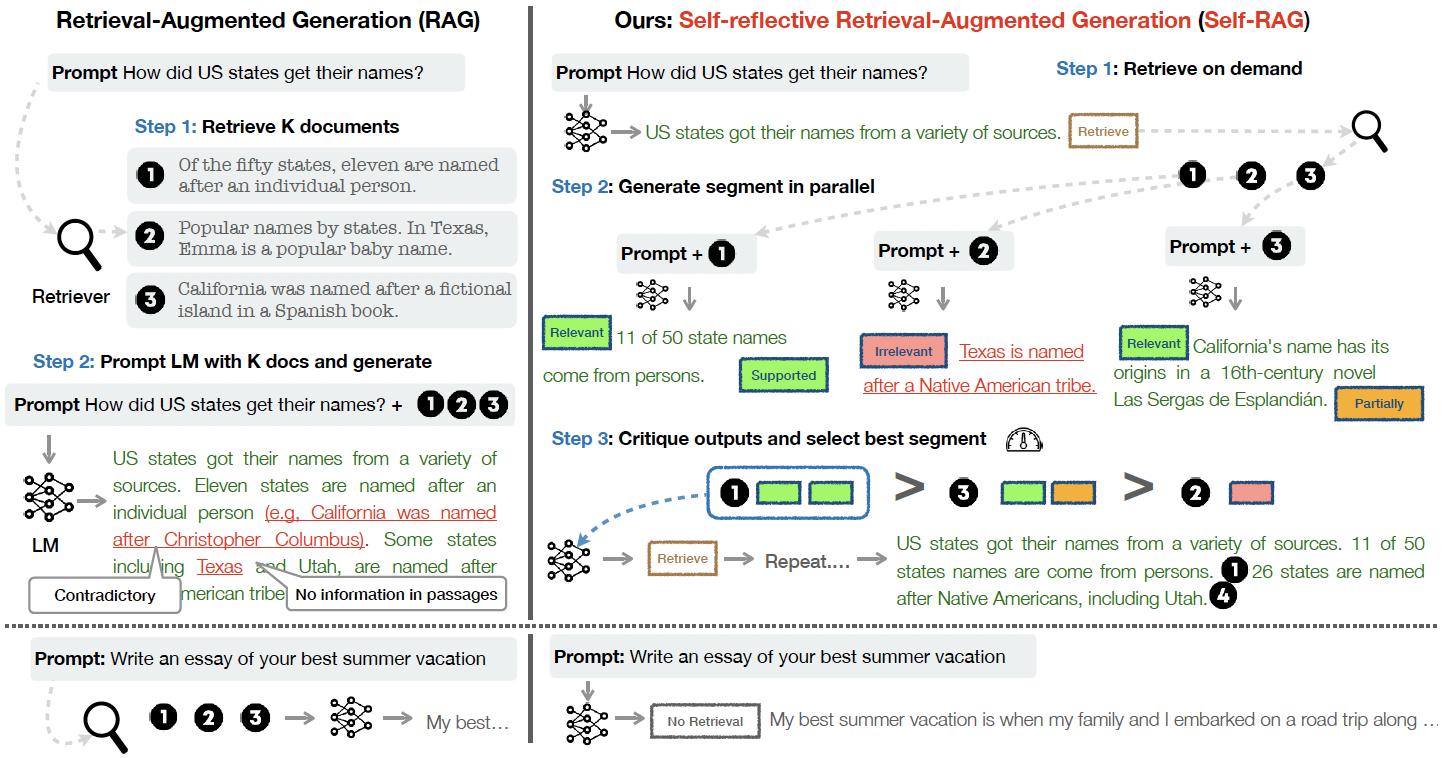

The authors propose Self-RAG, which aims to improve the quality and factuality of retrieval-augmented generation (RAG). Self-RAG extends vanilla RAG with a three-step self-reflection process: retrieval, generation, and critique. The retrieval step evaluates and retrieves relevant information, the generation step produces a number of candidate segments in parallel, and the critique step selects the best output segment through self-reflective critique.
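The three steps can be illustrated with a toy sketch. This is not the authors' implementation: the corpus, the word-overlap retriever, the echoing generator, the scoring rule, and every function name (`needs_retrieval`, `retrieve`, `generate`, `critique`, `self_rag_step`) are assumptions made up for illustration. In Self-RAG itself these decisions are made by a trained language model. The sketch also loops over candidates sequentially where the paper generates them in parallel.

```python
from dataclasses import dataclass

# Hypothetical toy corpus standing in for a real document store.
CORPUS = [
    "Retrieval augmented generation conditions a generator on retrieved passages.",
    "Paris is the capital of France.",
    "The Eiffel Tower is in Paris.",
]


@dataclass
class Candidate:
    passage: str
    segment: str
    score: float


def tokens(text: str) -> set[str]:
    # Lowercased bag of words with trailing punctuation stripped.
    return {w.strip(".,?!").lower() for w in text.split()}


def needs_retrieval(query: str) -> bool:
    # Assumed heuristic: very short queries skip retrieval.
    return len(tokens(query)) > 2


def retrieve(query: str, k: int = 2) -> list[str]:
    # Placeholder retriever: rank passages by word overlap with the query.
    q = tokens(query)
    ranked = sorted(CORPUS, key=lambda p: len(q & tokens(p)), reverse=True)
    return ranked[:k]


def generate(query: str, passage: str) -> str:
    # Placeholder generator: echoes the evidence instead of calling a model.
    if not passage:
        return "Answer from parametric knowledge only."
    return f"Based on the evidence: {passage}"


def critique(query: str, passage: str, segment: str) -> float:
    # Placeholder critic: relevance (query/passage overlap) plus
    # support (whether the segment is grounded in the passage).
    q = tokens(query)
    relevance = len(q & tokens(passage)) / max(len(q), 1)
    support = 1.0 if passage in segment else 0.0
    return relevance + support


def self_rag_step(query: str) -> str:
    # Step 1: decide whether to retrieve, then fetch passages.
    if not needs_retrieval(query):
        return generate(query, "")
    # Step 2: generate one candidate segment per retrieved passage.
    candidates = []
    for passage in retrieve(query):
        segment = generate(query, passage)
        candidates.append(Candidate(passage, segment, critique(query, passage, segment)))
    # Step 3: keep the candidate the critic scores highest.
    return max(candidates, key=lambda c: c.score).segment
```

Calling `self_rag_step("What is the capital of France?")` retrieves two passages, builds one candidate per passage, and returns the segment grounded in the passage that overlaps most with the query.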

The Self-RAG

Self-RAG shows promising experimental results on factuality, fluency, and citation precision and recall.
